Personal note: This post has been sitting unpublished for weeks; I fell into the perfectionism trap where it never felt “good enough to post.” I’m leaving for my fellowship @ DARPA today and decided to full send. Hope you like it; I’ll try to get back on track with publishing more frequently.

On May 30th, the Center for AI Safety published a one-sentence statement:

“Mitigating the risk of extinction from A.I. should be a global priority alongside other societal-scale risks, such as pandemics and nuclear war.”

This statement was signed by hundreds of prominent AI researchers and industry leaders, including the heads of OpenAI, DeepMind, and Anthropic, which naturally spawned a flurry of debate and discussion.

Seeing this, a couple of people have asked me to expand on my thinking from my first posts on the topic, where I tried to keep neutral. Getting this right is pretty important, so I’ve been thinking about this a lot, and I’ve come to some conclusions. I want to write this out comprehensively as most of the criticism of AI existentialist discourse has been superficial.1

The super-short version: In my view, the near-term (<20 years) extinction/existential risk from AI is extremely low (1 in 1000 chance, or 0.1%, with medium confidence). The long-term (<100 years) risk is higher than this (1 in 100 chance, or 1%, with very low confidence).2 Estimating the long-term risk more precisely is massively uncertain as it depends on technologies that have not yet been invented. Given this, investment in efforts to reduce uncertainty about the long-term risks from AI is very important, especially when those efforts can also help mitigate future significant harms from AI. More aggressive action now could possibly do more harm than good and should be avoided.

The short version:

I think placing the “risk of extinction from AI” in a similar category as pandemics and nuclear war is overstating the threat from AI in the near-term (<20 years), based on what we know today.3 If I had to pick a single “AI” risk to be most concerned about in the near-term, it would be the potential for the misuse of AI systems by bad actors.

However, the risk of extinction from AI is not zero, especially if we extend out to the long-term (<100 years). What is it? I don’t know, and that’s the worrisome part. We have good data on nukes and pandemics, not so much on AI-related catastrophes. My estimate is that near-term (<20 years) extinction from AI is extremely unlikely (1 in 1000 chance, or 0.1%, with medium confidence). However, the long-term (<100 years) risk is higher than this (1 in 100 chance, or 1%, with very low confidence).

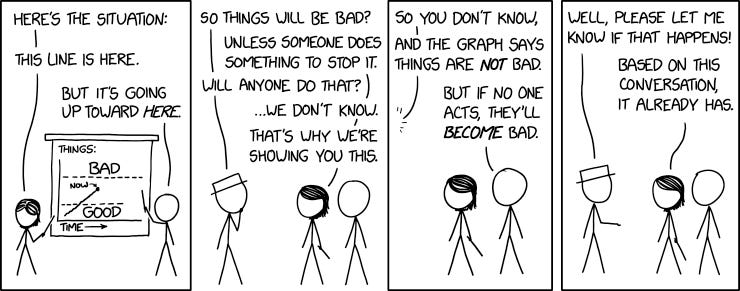

The time thresholds matter because we (the world) have time to determine two key factors - how to reduce the uncertainty about the potential AI risks, and then what should be done about them. If the short-term risks were higher, we’d need to do something concrete now, which is highly risky when there is no expert consensus on what exactly to do. If we tried to start a crash “Manhattan Project for AI Safety,” as some have suggested, I’d give it a high chance of being ineffectual, a moderate chance of making the problem worse, and only a small chance of making the problem better.

The potential benefits from broad deployment of AI systems are also considerable. Given this, strong evidence of significant harm from broad deployment will be needed for action.

I would prefer a modified statement that reads something like “Mitigating the risk of significant harm to humans from AI should be a global priority, similar to pandemics or nuclear war.”4 That said, I think the statement in its current form is net positive, likely the best compromise that could be achieved, and I support it.

The rest of this post is a more detailed look at how I arrived at my estimates.

From Zero to Doom

There are whole libraries of arguments one could read on LessWrong about AI alignment. I’ve read a lot of them. That said, the best “general case” explanation for AI risk remains Tim Urban’s Wait but Why 2015 article.5 The article describes the progression of AI from artificial narrow intelligence (ANI), aka AI that can do a single task, to artificial general intelligence (AGI), or AI that can do multiple tasks. From there, it postulates the concept of artificial superintelligence, or ASI, and discusses how that could threaten humanity. I think this is the best way to frame the discussion, so we’ll start with AGI.

Step 1: AGI

Everyone agrees that GPT-4, while powerful compared to what came before, isn’t taking over the world anytime soon. It’s more than an ANI, but not quite an AGI. Researchers from Microsoft described it as having “sparks of AGI” in a paper in March 2023, which is probably a little hype-y but not outlandishly so.

But what about GPT-5, or -6? Or 10? Or some new system that nobody’s even heard of yet? What are the chances we develop something that has the potential to do the kind of existential things we’re talking about?

Before we go further, we have to define what exactly “AGI” means, as there are two competing definitions:

“Expert” AGI — an AI system that performs on-par with the best-performing humans at a certain set of “important” human tasks.

“Broad” AGI - an AI system that performs at the level of a human across all tasks a human could perform, not just a certain set.

While discussions around the second definition are interesting, I’ll confine this essay to talking about “expert” AGI, as I think that’s the more relevant discussion.

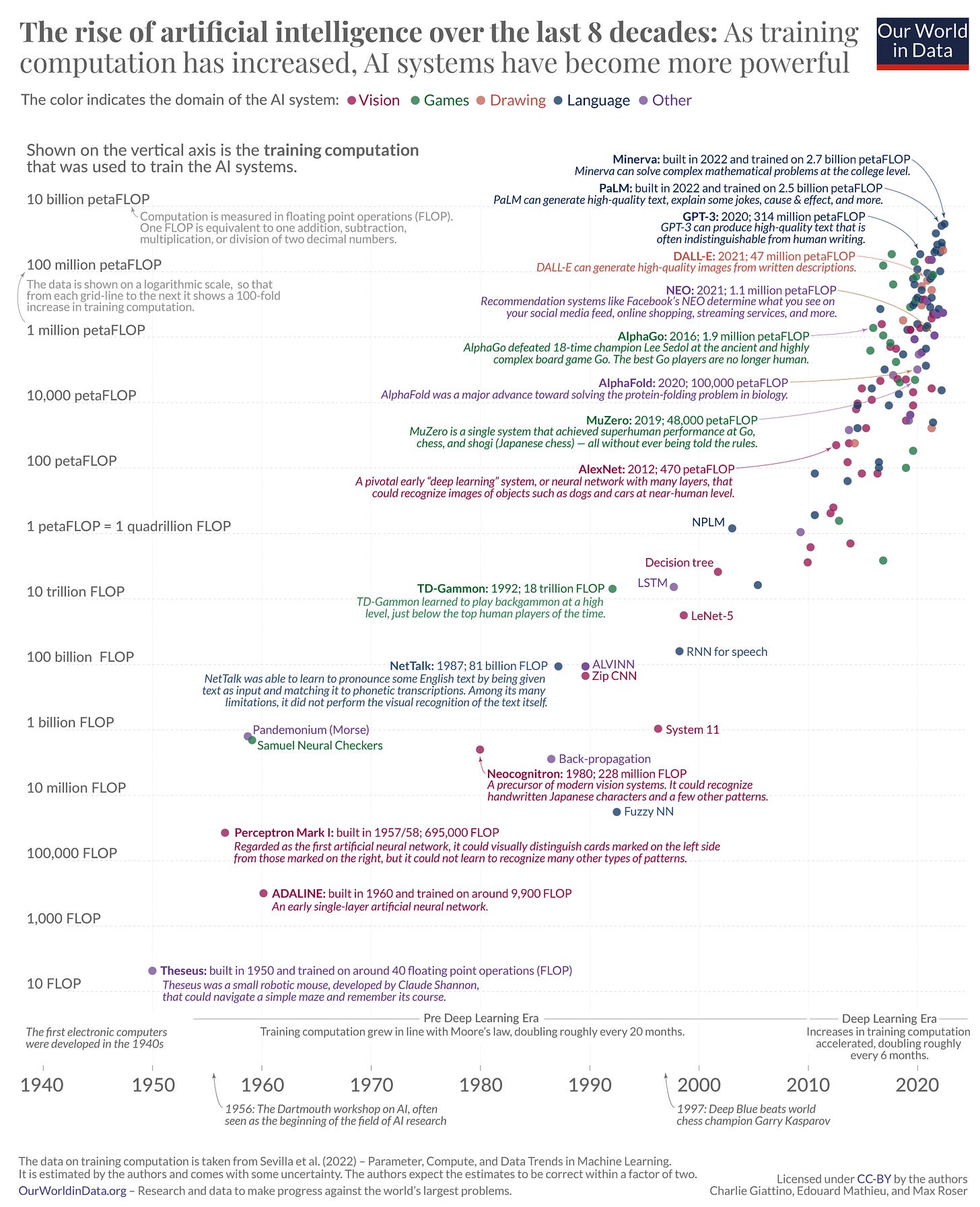

I am 80% certain (medium confidence) we will have AGI in the next 20 years, and 95% certain (low confidence) it will occur in the next 100 years. Why? Primarily, compute scaling.

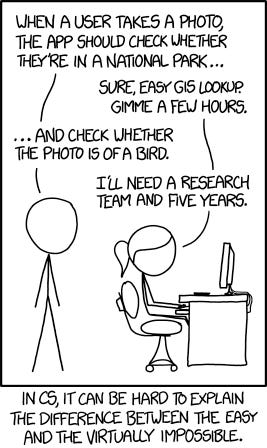

In a previous post, I briefly talked about how the AI boom of the 1950’s and 1960’s led to the “AI winter” of the 1970’s once it became evident that fully scaling AI was much harder than expected. For example, Marvin Minsky, the pre-eminent AI researcher of the period, was quoted in 1970 as saying “In three to eight years…we will have a machine with the general intelligence of an average human being.” Oops. It turns out that some tasks are easy for a computer and some are really, really hard.

Hans Moravec crystallized the discussion with a 1976 essay called “The Role of Raw Power in Intelligence.” Here’s a couple of quotes:

“Flight without adequate power to weight ratio is heartbreakingly difficult (vis. Langley's steam powered aircraft or current attempts at man powered flight), whereas with enough power (on good authority!) a shingle will fly."

“Although there are known brute force solutions to most AI problems, current machinery makes their implementation impractical. Instead, we are forced to expend our human resources trying to find computationally less intensive answers, even where there is no evidence that they exist.”

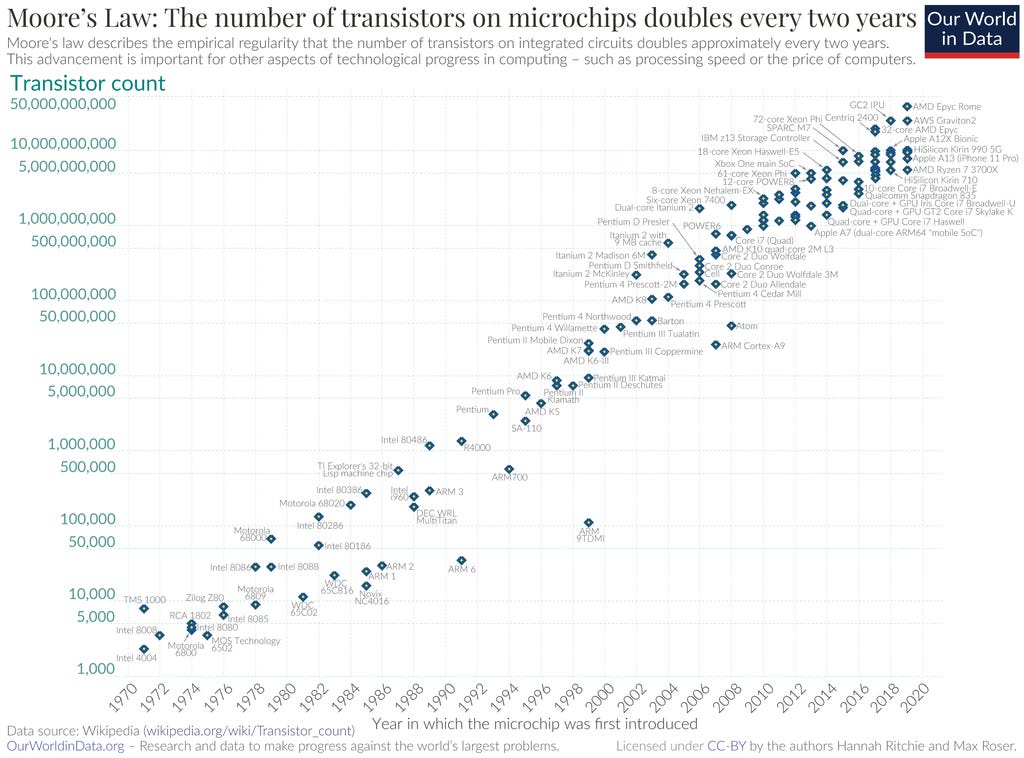

So time passed, and Moore’s Law happened…

Which has led to…

Rich Sutton summed this up in an excellent article in 2019 called “The Bitter Lesson.” It’s short and worth reading in its entirety; in short, the “bitter lesson” is the frustration of the researchers who have spent decades building complex optimization algorithms, only to be overtaken by a much simpler algorithm powered by faster computers.

So, where does this lead us? Sam Altman gave a remarkably candid interview last month that has since been taken down; thankfully, the Internet keeps copies. Per the article, he claims that the scaling laws still hold and that he expects the timeline to AGI to be shorter, not longer. I don’t see a good reason to disagree. It’s possible that Moore’s Law could break down spectacularly, or global instability could dramatically impact chip production. It’s also possible that the “Bitter Lesson” ends up not holding and we hit a point at which more compute doesn’t equal more results. Still, I don’t think these things are very likely.

Step 2: ASI

Okay, so we’ve got an AGI (which I previously described as a “human-level” intelligence). What about going beyond that? Presumably the curve doesn’t just stop at humans? What about superintelligence?

Definition Break: As with AGI, there are differing definitions of ASI. In Superintelligence, Bostrom defines it as “An intellect that greatly exceeds the cognitive performance of humans in virtually all domains of interest.” Richard Ngo interprets “greatly exceeds” as “greater than the sum of humanity if coordinated globally,” which I can agree with.

Well, yes, actually. This is where I part company with Tim Urban’s article and the Existentialists. My prediction of us achieving ASI is a 1% chance (medium confidence) in the next 20 years, or a 10% chance in the next 100 years (low confidence).

This seems like a tough claim to make considering I just argued the opposite, and I can already hear “Bitter Lesson” whispering in my ear. “Why wouldn’t the same thing continue? Don’t make the same mistake!”

To which I reply…“Plato’s Cave.” (Bear with me.)

For those unfamiliar, Plato presented an allegory describing a group of people who have lived in a cave all their lives and only seen shadows of certain objects. To those in the cave, the shadow of the object “is” the object. When they leave the cave, they realize that the shadow is just a limited “representation” of the object, and not the object itself. If they return to the cave and try to share their knowledge, they fail, because those in the cave lack the frame of reference to understand.

Now, the conventional theory of superintelligence involves an “intelligence explosion.” The concept is simple - instead of humans continuously improving AI capabilities a little at a time, you train the AI to do it (either via some form of self-improvement or making new, better AI’s). Every time, it gets a little bit faster, making the next improvement faster, and so on. If you have quick enough cycles, that makes growth at that point exponential.
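As a rough illustration of that feedback loop, here is a toy sketch in Python. Everything in it is an assumption made up for illustration: the function name `explosion_timeline`, the one-year first cycle, and the 2x speedup per cycle are hypothetical and are not estimates from this post or from anyone's actual model.

```python
def explosion_timeline(first_cycle_years=1.0, speedup=2.0, cycles=10):
    """Return cumulative elapsed years after each self-improvement cycle.

    Toy assumption: every completed cycle makes the next cycle
    `speedup` times faster than the one before it.
    """
    elapsed = 0.0
    cycle_time = first_cycle_years
    timeline = []
    for _ in range(cycles):
        elapsed += cycle_time      # time spent on this improvement
        timeline.append(elapsed)
        cycle_time /= speedup      # the improved AI works faster next time
    return timeline


if __name__ == "__main__":
    for n, years in enumerate(explosion_timeline(), start=1):
        print(f"improvement {n}: {years:.3f} years elapsed")
```

Under these made-up numbers the gap between improvements halves each cycle, so the improvements pile up ever closer together instead of arriving at a steady pace. Set `speedup` to 1.0 and the same loop gives plain, steady progress.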

There are several arguments against this I won’t make here (a good one is difficulty; it’s very possible that the difficulty of further improvements will also scale exponentially, negating exponentially scaling capabilities). Instead, though, I’ll argue something more fundamental. How exactly do you train an AI on how to learn? What data do you give it? What goal function do you get it to optimize on? This is hard enough for just human-level intelligence, which we can at least conceptualize - now do it for superhuman intelligence, which nobody has ever seen before. The current answer is “throw more compute at it and expect that the AI just develops the capability to learn on its own,” which seems like wishful thinking.

A lot of people thought reinforcement learning was going to do this - the canonical example is DeepMind’s AlphaGo, which trained on a vast database of human-played Go games to become the world’s best Go engine, followed by AlphaGo Zero, which created its own dataset by playing itself and promptly beat AlphaGo, followed by AlphaZero/MuZero, which diversified the concept of “game” beyond Go and became expert at chess, shogi, and Atari games. Unfortunately, that’s where it stopped; it appears that optimizing for games doesn’t translate well to other tasks.

I think the most likely scenario for ASI, if it occurs at all, is a very slow and gradual one; we make incremental improvements over time to lots of different task-based ANI’s, make more incremental improvements to how they hook together and communicate, and that gradual agglomeration eventually gets to a point where it feels superhuman. It might be a “fake” ASI, where we’re just massively speeding up a bunch of basic tasks to give the appearance of superintelligence…but if it feels and works like one, then that’s all you need.

Step 3: Doom!

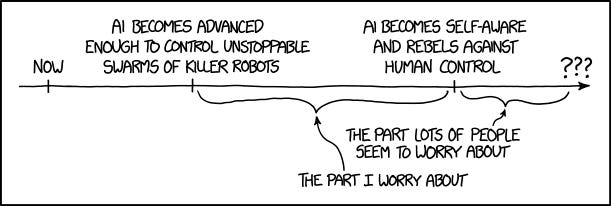

We finally get to the discussion on DOOM! Much of the discussion online revolves only around this point, which I think is mildly silly. There’s so much stuff that will happen before we get here that will completely change our thinking, so speculating doesn’t do much. It’s somewhat akin to going back in time and asking an early 20th-century doctor whether CRISPR gene therapy or CAR-T immunotherapy is a better treatment methodology for cancer.

Indulging the hypothetical, though: If we assume that the preceding problems have been solved and we now have superintelligent AI, is that an existential threat to humanity? I say yes, but only at a 10% probability (both short and long term, contingent on ASI).

There are two risky scenarios at play here. The first and most commonly discussed scenario is the “malicious AI” scenario - where an AI becomes powerful, typically via application of superintelligence, and wipes out humanity. This could be intentional (“I’ve developed a sense of self, humans are a threat, have to get them before they get me”), inadvertent (“I stopped humans from getting birth defects by ensuring they are never born”), or just a random accident (“Research Experiment 2,519,214: Stop planetary rotation, observe results”).

The point existentialists like to make here is that powerful AI’s are alien, and assuming they will have humanlike motives is foolish. I agree with that as a general concept, but I think it affects the probability distribution. Looking at bugs in computer software, the vast majority of computer bugs just cause a program to crash. Only a tiny handful keep the program running undetectably and become software vulnerabilities. I think AI alignment issues would hypothetically resolve the same way.

No, the scenario that worries me much more is not “Skynet” or “HAL-9000” but “WALL-E.” What happens if we build AI's that are so good/helpful that we end up building our society around them and just…let them run everything? Thankfully, we’ve got some time to figure that out.

Conclusion

In the end, it’s a simple calculation: ASI odds * Doom odds. 1% * 10% (short term) or 10% * 10% (long term) gives us 0.1% (1 in 1000) in the next 20 years, or 1% (1 in 100) in the next 100 years.
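For anyone who wants to plug in their own numbers, here is a minimal Python sketch of that multiplication. The probabilities are the ones stated in this post; the names `P_ASI`, `P_DOOM_GIVEN_ASI`, and `extinction_risk` are just labels chosen for this example.

```python
from fractions import Fraction

# Estimates from this post: chance of ASI within each horizon,
# and chance of doom given that ASI exists.
P_ASI = {
    "next 20 years": Fraction(1, 100),    # 1%
    "next 100 years": Fraction(10, 100),  # 10%
}
P_DOOM_GIVEN_ASI = Fraction(10, 100)      # 10%, same for both horizons


def extinction_risk(p_asi, p_doom_given_asi=P_DOOM_GIVEN_ASI):
    """Chain the two estimates: ASI odds * Doom odds."""
    return p_asi * p_doom_given_asi


if __name__ == "__main__":
    for horizon, p_asi in P_ASI.items():
        risk = extinction_risk(p_asi)
        one_in = risk.denominator // risk.numerator
        print(f"{horizon}: {float(risk):.1%} (1 in {one_in})")
```

Using exact fractions avoids floating-point noise in the "1 in N" figure. Swapping in a different ASI or doom estimate shows how sensitive the final number is to each input.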

Okay, but what do those numbers mean, exactly? Let’s relate it to something more concrete; lifetime odds of death from certain causes.

1 in 10: Roughly the odds that a person will die from heart disease (actually a little higher than 1 in 10). Governments spend tons of money working on it. Doctors discuss it with you at annual checkups to help prevent it. You likely know of someone who’s died of it.

1 in 100: Roughly the odds that a person will die in a car crash. Big deal, though not as big a deal as heart disease. Governments spend some money on it. Moderate preventative measures are in place that could be much stricter. Someone you know probably knows of someone who’s died of it.

1 in 1,000: Roughly the odds that a person will die by drowning. Mild concern for most individuals, but not something that impacts our day-to-day lives. Mild common-sense preventative measures, but very light government involvement. A tragedy when it happens, but rare enough that it’s not a big concern.

Many existentialists think we need to treat it as a 1 in 10 problem, and aggressively attack it like we would attack other 1 in 10 problems. I think that’s an overreaction. We should treat it like a 1 in 1000 problem, but one that could be a 1 in 100 problem (or worse!). We should do lots of research to determine how significant the problem is, and make sure that any proposed solutions don’t just make the problem worse.

Further Reading

There have been several other AI safety theorists who have attempted to estimate the probability of AI existential risk. Michael Aird has compiled several of them in a database, for which I am extremely thankful.

There is a full curriculum on AI Safety here with hundreds of readings.

Standard disclaimer: All views presented are those of the author and do not represent the views of the U.S. government or any of its components.

My caricature of the debate -

AI Existentialists: “Here’s a ten page article with twenty footnotes about why I’ve changed my belief of things going bad from 5% to 6%!” AI existentialist critics: “You’re stupid/a shill/part of a cult. AI will save the world! Follow me on Twitter.”

Why the super-specific numbers? Making predictions without specific numbers lets you weasel your way out later if it doesn’t pan out. I’d like to encourage not doing that.

There is a nifty rhetorical trick going on where the statement specifically uses the word “extinction,” to play to the Existentialists, but groups it with nukes and pandemics, to play to the rest of the world. There’s a large difference between “kills a ton of people, but we learn, recover, and never do that again” and “extinguishes humanity permanently.” If we’re specifically ONLY talking about extinction and NOT “kills a ton of people,” then the grouping of AI makes sense…but the rest of the world is not going to interpret it this way.

By “significant,” I mean “can injure/kill mass amounts of people.” AI can cause other harms, but those are not new problems, and they should be handled by existing bodies.

Also see this reply by Luke Muehlhauser.

I enjoyed this, so thank you. Are you confident you can steel man the position taken by proponents of AI doom? Would you consider doing this at some point and expressing the places where you think it falls down?